Kowl (previously known as Kafka Owl) is a web application that helps you explore the messages in your Apache Kafka cluster and get better insight into what is actually happening in that cluster, all from a comfortable web UI.

Written mostly in TypeScript and Go, Kowl provides the following features:

- Message viewer: Explore your topics' messages in the message viewer through ad-hoc queries and dynamic filters. Find any message you want by filtering with JavaScript functions. Supported encodings are JSON, Avro, XML, text and binary (hex view), and the encoding is detected automatically.

- Consumer groups: List all your active consumer groups along with their group offsets. You can visualize group lag by topic (the sum of that topic's partition lags), by single partition, or as the sum of all partition lags (group lag).

- Topic overview: Browse the list of your Kafka topics, check their configuration and space usage, list all consumers of a topic, inspect partition details (such as low and high water marks, message count, …), embed topic documentation from a Git repository, and more.

- Cluster overview: List the available brokers along with their space usage, rack ID and other information to get a high-level overview of the brokers in your cluster.
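To make the lag numbers concrete: a partition's lag is commonly computed as the partition's high water mark minus the consumer group's committed offset, and topic lag and group lag are sums of those values. The TypeScript sketch below illustrates only that arithmetic. The `PartitionOffset` shape, the function names and the sample numbers are hypothetical and are not taken from Kowl's source.

```typescript
// Hypothetical input: one entry per partition a consumer group has committed offsets for.
interface PartitionOffset {
  topic: string;
  partition: number;
  highWaterMark: number; // offset of the next message to be written to the partition
  committedOffset: number; // offset of the next message the group will read
}

// Partition lag: messages in the partition that the group has not consumed yet.
// Clamped at 0 so offsets read at slightly different times never yield a negative lag.
function partitionLag(p: PartitionOffset): number {
  return Math.max(0, p.highWaterMark - p.committedOffset);
}

// Topic lag: sum of the partition lags of each topic.
function lagByTopic(offsets: PartitionOffset[]): Map<string, number> {
  const lags = new Map<string, number>();
  for (const p of offsets) {
    lags.set(p.topic, (lags.get(p.topic) ?? 0) + partitionLag(p));
  }
  return lags;
}

// Group lag: sum of all partition lags across every topic the group consumes.
function groupLag(offsets: PartitionOffset[]): number {
  return offsets.reduce((sum, p) => sum + partitionLag(p), 0);
}

// Made-up sample data for one consumer group.
const offsets: PartitionOffset[] = [
  { topic: "orders", partition: 0, highWaterMark: 120, committedOffset: 100 },
  { topic: "orders", partition: 1, highWaterMark: 80, committedOffset: 80 },
  { topic: "payments", partition: 0, highWaterMark: 50, committedOffset: 45 },
];

console.log(lagByTopic(offsets).get("orders")); // 20
console.log(lagByTopic(offsets).get("payments")); // 5
console.log(groupLag(offsets)); // 25
```

Here the "orders" topic lags by 20 messages (20 on partition 0, none on partition 1), "payments" by 5, and the whole group by 25.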

#1. Getting started

The easiest way to get started with Kowl is to use Docker and Docker Compose. The first thing you need to do is clone the GitHub project:

```bash
git clone https://github.com/cloudhut/kowl.git
```

Once you have cloned the project, go to the `kowl/docs/local` directory. There you will find two files: `docker-compose.yaml` and `config.yaml`. The `docker-compose.yaml` file looks like this:

```yaml
version: '2.1'

services:
  zoo1:
    image: zookeeper:3.4.9
    hostname: zoo1
    ports:
      - "2181:2181"
    environment:
      ZOO_MY_ID: 1
      ZOO_PORT: 2181
      ZOO_SERVERS: server.1=zoo1:2888:3888
    volumes:
      - ./zk-single-kafka-single/zoo1/data:/data
      - ./zk-single-kafka-single/zoo1/datalog:/datalog

  kafka1:
    image: confluentinc/cp-kafka:5.5.0
    hostname: kafka1
    ports:
      - "9092:9092"
    environment:
      KAFKA_ADVERTISED_LISTENERS: LISTENER_DOCKER_INTERNAL://kafka1:19092,LISTENER_DOCKER_EXTERNAL://${DOCKER_HOST_IP:-127.0.0.1}:9092
      KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: LISTENER_DOCKER_INTERNAL:PLAINTEXT,LISTENER_DOCKER_EXTERNAL:PLAINTEXT
      KAFKA_INTER_BROKER_LISTENER_NAME: LISTENER_DOCKER_INTERNAL
      KAFKA_ZOOKEEPER_CONNECT: "zoo1:2181"
      KAFKA_BROKER_ID: 1
      KAFKA_LOG4J_LOGGERS: "kafka.controller=INFO,kafka.producer.async.DefaultEventHandler=INFO,state.change.logger=INFO"
      KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1
    volumes:
      - ./zk-single-kafka-single/kafka1/data:/var/lib/kafka/data
    depends_on:
      - zoo1

  kowl:
    image: quay.io/cloudhut/kowl:v1.2.1
    restart: on-failure
    hostname: kowl
    volumes:
      - ./config.yaml:/etc/kowl/config.yaml
    ports:
      - "8080:8080"
    entrypoint: ./kowl --config.filepath=/etc/kowl/config.yaml
    depends_on:
      - kafka1
```

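The `docker-compose.yaml` file defines the ZooKeeper, Kafka and Kowl services. Note that the Kafka broker advertises two listeners: `LISTENER_DOCKER_INTERNAL` (`kafka1:19092`), which containers inside the Compose network such as Kowl use, and `LISTENER_DOCKER_EXTERNAL` (`127.0.0.1:9092` unless `DOCKER_HOST_IP` is set), which clients on your host use. The separate `config.yaml` file, mounted into the Kowl container at `/etc/kowl/config.yaml` and passed via `--config.filepath`, tells Kowl how to reach the Kafka broker, which is why it points at the internal listener: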

```yaml
kafka:
  brokers:
    - kafka1:19092

# server:
#   listenPort: 8080
```

#2. Run Kowl

To start Kowl, run `docker-compose up` in the `kowl/docs/local` directory. To verify that the containers are running, run `docker ps -a`:

```
$ sudo docker ps -a
CONTAINER ID   IMAGE                          COMMAND                  CREATED          STATUS          PORTS                                        NAMES
761f71938472   quay.io/cloudhut/kowl:v1.2.1   "./kowl --config.fil…"   50 minutes ago   Up 41 minutes   0.0.0.0:8080->8080/tcp                       local_kowl_1
87c548c71137   confluentinc/cp-kafka:5.5.0    "/etc/confluent/dock…"   50 minutes ago   Up 41 minutes   0.0.0.0:9092->9092/tcp                       local_kafka1_1
528f7d6e238a   zookeeper:3.4.9                "/docker-entrypoint.…"   50 minutes ago   Up 41 minutes   2888/tcp, 0.0.0.0:2181->2181/tcp, 3888/tcp   local_zoo1_1
```
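`docker ps` only tells you that the containers are up. As an extra check, a small Node.js script can confirm that the ports published in `docker-compose.yaml` (9092 for Kafka's external listener, 2181 for ZooKeeper and 8080 for Kowl) accept TCP connections from your host. This is a minimal sketch using Node's built-in `net` module; the `canConnect` helper is hypothetical, and a successful connection only shows that something is listening on the port, not that Kafka itself is healthy.

```typescript
import * as net from "net";

// Resolves true if a TCP connection to host:port is established within timeoutMs, false otherwise.
function canConnect(host: string, port: number, timeoutMs = 2000): Promise<boolean> {
  return new Promise((resolve) => {
    const socket = net.createConnection({ host, port });
    const finish = (ok: boolean) => {
      socket.destroy(); // we only care whether the connection could be opened
      resolve(ok);
    };
    socket.setTimeout(timeoutMs, () => finish(false));
    socket.once("connect", () => finish(true));
    socket.once("error", () => finish(false));
  });
}

// Ports that docker-compose.yaml publishes to the host.
const published: Array<[string, number]> = [
  ["kafka1", 9092],
  ["zoo1", 2181],
  ["kowl", 8080],
];

for (const [service, port] of published) {
  canConnect("127.0.0.1", port).then((ok) =>
    console.log(`${service} on port ${port}: ${ok ? "reachable" : "not reachable"}`)
  );
}
```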

When you run `docker-compose up`, you should also see a log line like the one below in the output. It confirms that Kowl was able to connect to your Kafka broker.

```
kowl_1 | {"level":"info","ts":"2020-12-15T06:30:04.257Z","msg":"connection to all brokers healthy","brokers":1}
```
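If you start the stack in the background or in a CI job, you may prefer to check for this log line programmatically. Since the log entry after the `kowl_1 |` prefix is JSON, it can be parsed instead of grepped. The sketch below assumes only what the sample line above shows (a `msg` of `connection to all brokers healthy` and a numeric `brokers` field); the `healthyBrokerCount` helper is hypothetical, and the message text may differ between Kowl versions.

```typescript
// Only the fields used below are declared; a real log entry may contain more.
interface KowlLogEntry {
  level?: string;
  ts?: string;
  msg?: string;
  brokers?: number;
}

// Returns the broker count from Kowl's "connection to all brokers healthy" log line,
// or null if the line is not that message.
function healthyBrokerCount(line: string): number | null {
  // docker-compose prefixes each line with "<container> | ", so parse from the first "{".
  const start = line.indexOf("{");
  if (start === -1) return null;
  let entry: KowlLogEntry;
  try {
    entry = JSON.parse(line.slice(start)) as KowlLogEntry;
  } catch {
    return null; // not valid JSON, e.g. a plain-text line from another container
  }
  if (entry.msg !== "connection to all brokers healthy") return null;
  return typeof entry.brokers === "number" ? entry.brokers : null;
}

const sample =
  'kowl_1 | {"level":"info","ts":"2020-12-15T06:30:04.257Z","msg":"connection to all brokers healthy","brokers":1}';
console.log(healthyBrokerCount(sample)); // 1
```

You could feed this function the output of `docker-compose logs kowl` line by line and compare the result with the number of brokers you expect (one in this setup).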

To verify that Kowl is running, open http://localhost:8080 in your browser.

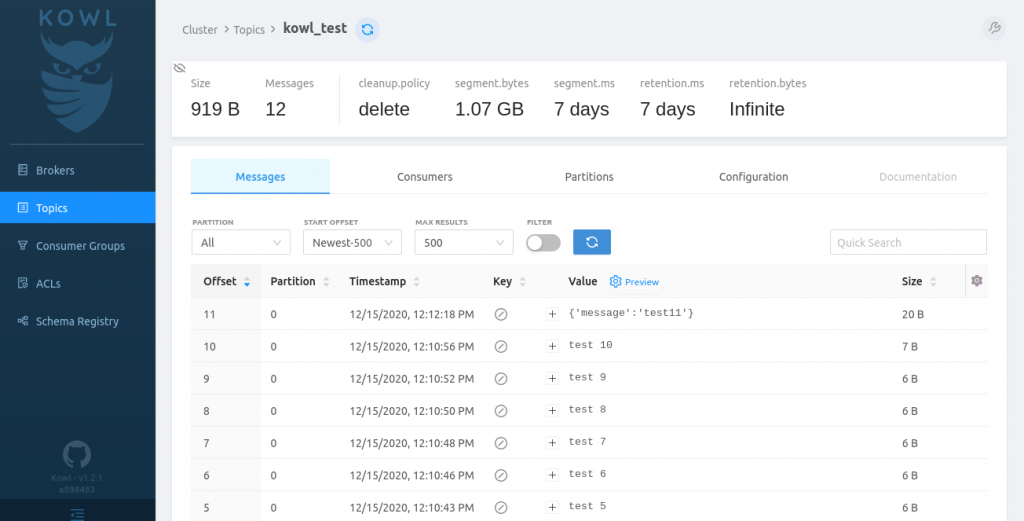
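To run the same check from a script instead of a browser, a request to the published port should return a successful HTTP status. The sketch below uses Node's built-in `http` module; the `httpStatus` helper is hypothetical, and it targets `127.0.0.1` rather than `localhost` because `docker ps` shows port 8080 published on the IPv4 address `0.0.0.0`, while `localhost` may resolve to the IPv6 address `::1` on some setups.

```typescript
import * as http from "http";

// Resolves with the HTTP status code returned for url, or null if the request fails or times out.
function httpStatus(url: string, timeoutMs = 3000): Promise<number | null> {
  return new Promise((resolve) => {
    const req = http.get(url, (res) => {
      res.resume(); // drain and discard the body; only the status code matters here
      resolve(res.statusCode ?? null);
    });
    req.setTimeout(timeoutMs, () => req.destroy(new Error("request timed out")));
    req.on("error", () => resolve(null));
  });
}

httpStatus("http://127.0.0.1:8080/").then((status) => {
  // Treat any 2xx or 3xx response as "the UI is being served".
  const up = status !== null && status >= 200 && status < 400;
  console.log(up ? `Kowl UI responded with ${status}` : "Kowl UI is not reachable");
});
```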

Once you create a topic and produce some messages to it, you should see something like the screenshot below.

For each message, the UI shows its offset, partition, timestamp, key, value (the actual message payload) and size.

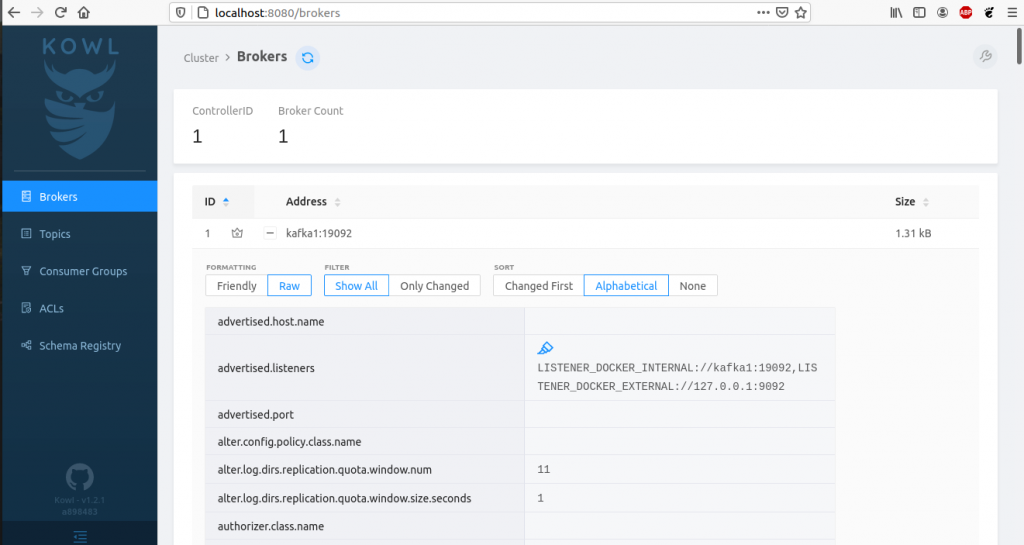
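The message viewer's JavaScript filters, mentioned in the feature list, are essentially predicates over these fields: a function that receives a message and returns `true` to keep it. The TypeScript sketch below illustrates that idea outside of Kowl. The `KafkaMessage` type, its field names and the sample data are hypothetical and merely mirror the UI columns; the variable names Kowl actually exposes in its filter editor may differ.

```typescript
// Hypothetical message shape mirroring the columns shown in the Kowl UI.
interface KafkaMessage {
  offset: number;
  partition: number;
  timestamp: Date;
  key: string | null;
  value: unknown; // decoded payload, e.g. a parsed JSON object
  sizeBytes: number;
}

// A filter is a predicate: return true to keep the message, false to hide it.
type MessageFilter = (msg: KafkaMessage) => boolean;

// Example filter: JSON messages on partition 0 whose "status" field equals "FAILED".
const failedOnPartition0: MessageFilter = (msg) => {
  if (msg.partition !== 0) return false;
  const v = msg.value;
  return typeof v === "object" && v !== null && (v as { status?: unknown }).status === "FAILED";
};

// Made-up sample messages.
const messages: KafkaMessage[] = [
  { offset: 0, partition: 0, timestamp: new Date("2020-12-15T06:31:00Z"), key: "a", value: { status: "OK" }, sizeBytes: 15 },
  { offset: 1, partition: 0, timestamp: new Date("2020-12-15T06:32:00Z"), key: "b", value: { status: "FAILED" }, sizeBytes: 19 },
  { offset: 0, partition: 1, timestamp: new Date("2020-12-15T06:33:00Z"), key: "c", value: { status: "FAILED" }, sizeBytes: 19 },
];

console.log(messages.filter(failedOnPartition0).map((m) => m.key)); // [ 'b' ]
```

Only the message with key `b` passes: `a` has a different status, and `c` is on partition 1.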

You can also check the details of the brokers running in your Kafka cluster, such as their space usage and rack ID.

That’s it for this tutorial. Stay tuned for more.