Burrow is a monitoring companion for Apache Kafka that provides consumer lag checking as a service, without the need to specify thresholds. It monitors committed offsets for all consumers and calculates the status of those consumers on demand. An HTTP endpoint serves that status on request and also exposes other Kafka cluster information. Configurable notifiers can send status out via email or via HTTP calls to another service.

Features:

- NO THRESHOLDS! Groups are evaluated over a sliding window.

- Multiple Kafka Cluster support

- Automatically monitors all consumers using Kafka-committed offsets

- Configurable support for Zookeeper-committed offsets

- Configurable support for Storm-committed offsets

- HTTP endpoint for consumer group status, as well as broker and consumer information

- Configurable emailer for sending alerts for specific groups

- Configurable HTTP client for sending alerts to another system for all groups
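The sliding-window idea can be illustrated with a small model. The sketch below is a simplified, hypothetical Python version that loosely follows the evaluation rules described in Burrow's documentation: any zero lag in the window means OK, unchanged offsets with non-shrinking lag mean the partition is stalled, and advancing offsets with non-shrinking lag mean a warning. It is not Burrow's Go implementation and leaves out its other checks (for example the time-based check for consumers that stopped committing). `OffsetSample` and `evaluate_window` are illustrative names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class OffsetSample:
    """One observation of a partition's committed offset and lag (illustrative)."""
    offset: int  # offset committed by the consumer group
    lag: int     # broker head offset minus the committed offset


def evaluate_window(window: List[OffsetSample]) -> str:
    """Classify a partition from a sliding window of samples, oldest first.

    Simplified model of threshold-free evaluation; not Burrow's actual code.
    """
    if len(window) < 2:
        raise ValueError("need at least two samples to compare")
    # Rule: lag reached zero at some point in the window, so the consumer kept up.
    if any(s.lag == 0 for s in window):
        return "OK"
    lags = [s.lag for s in window]
    lag_not_shrinking = all(later >= earlier for earlier, later in zip(lags, lags[1:]))
    offsets_unchanged = len({s.offset for s in window}) == 1
    # Rule: no new commits while lag holds or grows means the consumer is stuck.
    if offsets_unchanged and lag_not_shrinking:
        return "STALLED"
    # Rule: commits advance but lag never shrinks, so the consumer is falling behind.
    if lag_not_shrinking:
        return "WARN"
    return "OK"
```

No fixed lag number appears anywhere: a group with a steady lag of 10,000 that periodically drains to zero is OK, while a group whose lag creeps from 5 to 8 without ever shrinking is flagged.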

You can get started quickly with Burrow using its Docker setup.

#1. Download Burrow

First, clone the Burrow Git repository:

```shell
$ git clone https://github.com/linkedin/Burrow.git
```

Once it is cloned, open the docker-compose.yml file that ships with the repository and update the volume paths so they point to where your Burrow directory is located (the example below uses /apps/Burrow/Burrow). Besides building Burrow, the compose file also pulls Kafka and ZooKeeper images.

Burrow runs on port 8000, and ZooKeeper and Kafka run on ports 2181 and 9092 respectively.

```yaml
version: "2"
services:
  burrow:
    build: .
    volumes:
      - /apps/Burrow/Burrow/docker-config:/etc/burrow/
      - /apps/Burrow/Burrow/tmp:/var/tmp/burrow
    ports:
      - 8000:8000
    depends_on:
      - zookeeper
      - kafka
    restart: always
  zookeeper:
    image: wurstmeister/zookeeper
    ports:
      - 2181:2181
  kafka:
    image: wurstmeister/kafka
    ports:
      - 9092:9092
    environment:
      KAFKA_ZOOKEEPER_CONNECT: zookeeper:2181/local
      KAFKA_ADVERTISED_HOST_NAME: kafka
      KAFKA_ADVERTISED_PORT: 9092
      KAFKA_CREATE_TOPICS: "test-topic:2:1,test-topic2:1:1,test-topic3:1:1"
```

The docker-config directory, mounted at /etc/burrow/ inside the Burrow container, contains burrow.toml, the configuration file Burrow reads at startup:

```toml
[zookeeper]
servers=[ "zookeeper:2181" ]
timeout=6
root-path="/burrow"

[cluster.local]
class-name="kafka"
servers=[ "kafka:9092" ]
topic-refresh=60
offset-refresh=30

[consumer.local]
class-name="kafka"
cluster="local"
servers=[ "kafka:9092" ]
group-denylist="^(console-consumer-|python-kafka-consumer-).*$"
group-allowlist=""

[consumer.local_zk]
class-name="kafka_zk"
cluster="local"
servers=[ "zookeeper:2181" ]
zookeeper-path="/local"
zookeeper-timeout=30
group-denylist="^(console-consumer-|python-kafka-consumer-).*$"
group-allowlist=""

[httpserver.default]
address=":8000"
```
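The group-denylist setting is a regular expression matched against consumer group names. The sketch below uses a hypothetical helper, is_monitored, to show which names the pattern above would exclude. It only approximates Burrow's filtering: Burrow is written in Go, and Python's re module is used here on the assumption that this simple anchored pattern behaves the same way for ordinary group names.

```python
import re

# Same pattern as group-denylist in the burrow.toml above.
GROUP_DENYLIST = re.compile(r"^(console-consumer-|python-kafka-consumer-).*$")


def is_monitored(group: str) -> bool:
    """Return False for group names the denylist pattern would exclude."""
    return GROUP_DENYLIST.match(group) is None


if __name__ == "__main__":
    for name in ["test-group", "console-consumer-12345", "python-kafka-consumer-7"]:
        print(f"{name}: {'monitored' if is_monitored(name) else 'skipped'}")
```

Because the pattern is anchored with ^, only names that start with one of the prefixes are skipped; a group such as my-console-consumer-1 is still monitored.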

Once the configuration is in place, run docker-compose up from the Burrow directory.

After the images are downloaded, three containers should be running:

```shell
$ sudo docker ps -a
CONTAINER ID   IMAGE                    COMMAND                  CREATED        STATUS          PORTS                                                NAMES
31564cfa6bea   burrow_burrow            "/app/burrow --confi…"   20 hours ago   Up 3 seconds    0.0.0.0:8000->8000/tcp                               burrow_burrow_1
1e1e15db8f50   wurstmeister/zookeeper   "/bin/sh -c '/usr/sb…"   20 hours ago   Up 55 seconds   22/tcp, 2888/tcp, 3888/tcp, 0.0.0.0:2181->2181/tcp   burrow_zookeeper_1
167ab303ad51   wurstmeister/kafka       "start-kafka.sh"         20 hours ago   Up 38 seconds   0.0.0.0:9092->9092/tcp                               burrow_kafka_1
```

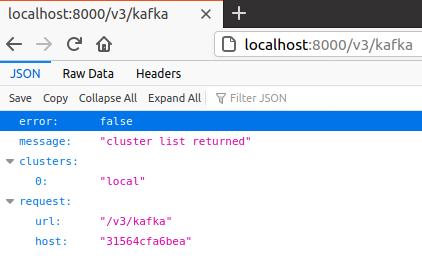
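Besides docker ps, a quick way to confirm that the ports published in docker-compose.yml accept connections is a small TCP check. This is a hypothetical helper script (port_open and check_services are illustrative names, and localhost is assumed to be the Docker host). A successful connection only shows that the port is reachable, not that the service behind it is healthy.

```python
import socket
from typing import Dict

# Host-side ports published in the docker-compose.yml above.
EXPECTED_PORTS = {"burrow": 8000, "zookeeper": 2181, "kafka": 9092}


def port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers refused connections and timeouts
        return False


def check_services(host: str = "localhost") -> Dict[str, bool]:
    """Map each service name to whether its published port accepts connections."""
    return {name: port_open(host, port) for name, port in EXPECTED_PORTS.items()}


if __name__ == "__main__":
    for name, ok in check_services().items():
        print(f"{name:10} {'up' if ok else 'DOWN'}")
```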

To verify that Burrow is working, open http://localhost:8000/v3/kafka in your browser. The response lists the cluster name configured in burrow.toml (local in this setup).

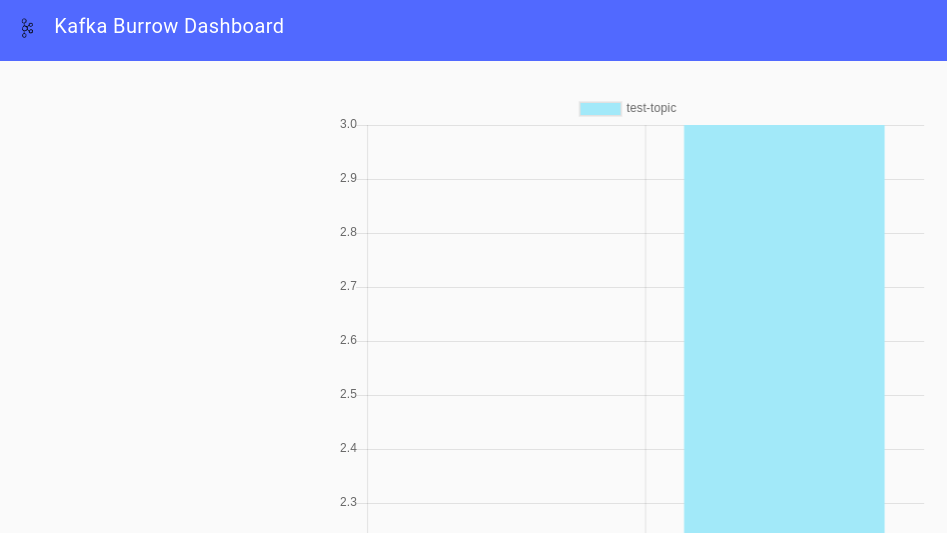
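The same check can be scripted. This sketch assumes the /v3/kafka response is a JSON object with an error flag, a message and a clusters array, as described in Burrow's HTTP endpoint documentation. The helper names are hypothetical, and only the Python standard library is used.

```python
import json
import urllib.request
from typing import List

BURROW_URL = "http://localhost:8000"  # Burrow's port from docker-compose.yml


def parse_cluster_list(body: str) -> List[str]:
    """Return the cluster names from a /v3/kafka JSON body (assumed shape)."""
    data = json.loads(body)
    if data.get("error"):
        raise RuntimeError(data.get("message", "Burrow returned an error"))
    return list(data.get("clusters", []))


def fetch_clusters(base_url: str = BURROW_URL) -> List[str]:
    """Query Burrow over HTTP and return the configured cluster names."""
    with urllib.request.urlopen(f"{base_url}/v3/kafka", timeout=5) as resp:
        return parse_cluster_list(resp.read().decode("utf-8"))


if __name__ == "__main__":
    clusters = fetch_clusters()
    print("Burrow clusters:", clusters)
    if "local" not in clusters:
        raise SystemExit("cluster 'local' from burrow.toml was not reported")
```

Parsing is kept separate from the HTTP call so that the response handling can be checked without a running Burrow instance.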

#2. Set Up Burrow Dashboard

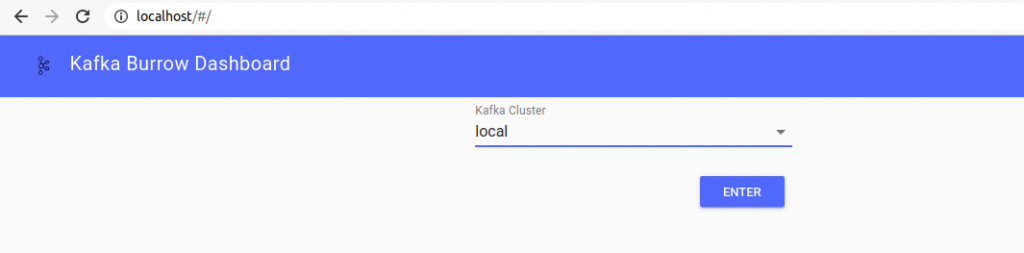

The easiest way to get started with the dashboard is its Docker image, joway/burrow-dashboard. The project's Git page has further details.

```shell
sudo docker pull joway/burrow-dashboard
```

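Run the dashboard with the command below. BURROW_BACKEND tells the dashboard where to find Burrow's HTTP endpoint, and --network host lets the container reach it at localhost:8000. With host networking, Docker discards the -p 80:80 mapping, and the dashboard is reached directly on the host's port 80.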

```shell
sudo docker run --network host -e BURROW_BACKEND=http://localhost:8000 -d -p 80:80 joway/burrow-dashboard:latest
```

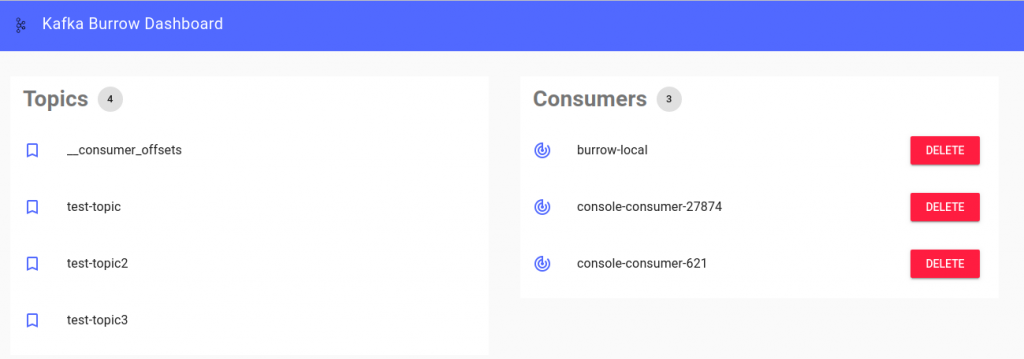

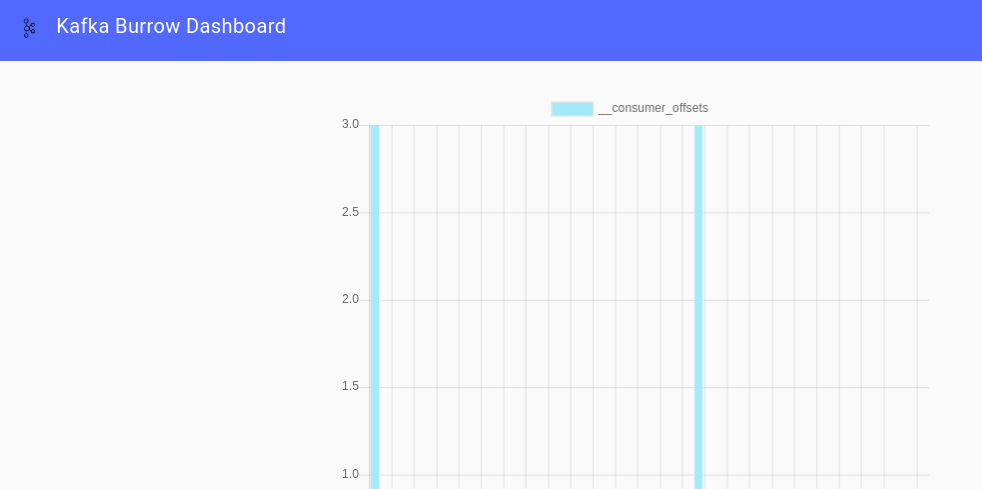

Once the dashboard is running, open http://localhost to view it. To test the setup, write some data into a topic: open a bash shell in the Kafka container (burrow_kafka_1 in the docker ps output above), produce a few messages with kafka-console-producer.sh, then read them back with kafka-console-consumer.sh.

```shell
$ sudo docker exec -it <container id> bash
$ cd /opt/kafka_2.13-2.6.0/bin
$ ./kafka-console-producer.sh --topic test-topic --bootstrap-server localhost:9092
>test
>test1
>test2
>test3
>test4
$ ./kafka-console-consumer.sh --topic test-topic --bootstrap-server localhost:9092 --from-beginning
test
test1
test2
test3
test4
```
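Burrow reports lag per consumer group, so this test only shows up in Burrow if the consumer belongs to a group Burrow monitors. When run without --group, kafka-console-consumer.sh uses a generated group name starting with console-consumer-, which the group-denylist in burrow.toml filters out. Passing --group with another name (for example a hypothetical test-group) avoids that. The sketch below assumes Burrow's /v3/kafka/(cluster)/consumer and /v3/kafka/(cluster)/consumer/(group)/lag endpoints, and that the lag response carries a status object with group, status and totallag fields. Treat those field names as assumptions to check against the Burrow documentation; the helper names are illustrative.

```python
import json
import urllib.parse
import urllib.request
from typing import Any, Dict, List

BURROW_URL = "http://localhost:8000"  # Burrow's port from docker-compose.yml
CLUSTER = "local"                     # cluster name from [cluster.local] in burrow.toml


def get_json(path: str, base_url: str = BURROW_URL) -> Dict[str, Any]:
    """GET a Burrow endpoint and return the decoded JSON, raising on error responses."""
    with urllib.request.urlopen(base_url + path, timeout=5) as resp:
        data = json.loads(resp.read().decode("utf-8"))
    if data.get("error"):
        raise RuntimeError(data.get("message", "Burrow returned an error"))
    return data


def summarize_lag(data: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only the group name, overall status and total lag from a /lag response."""
    status = data["status"]  # assumed shape: {"group": ..., "status": ..., "totallag": ...}
    return {"group": status["group"], "status": status["status"], "totallag": status["totallag"]}


def list_groups(cluster: str = CLUSTER) -> List[str]:
    """Return the consumer groups Burrow currently tracks for the cluster."""
    return list(get_json(f"/v3/kafka/{cluster}/consumer")["consumers"])


def group_lag(group: str, cluster: str = CLUSTER) -> Dict[str, Any]:
    """Fetch and summarize the lag status of one consumer group."""
    quoted = urllib.parse.quote(group, safe="")
    return summarize_lag(get_json(f"/v3/kafka/{cluster}/consumer/{quoted}/lag"))


if __name__ == "__main__":
    for name in list_groups():
        print(group_lag(name))
```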

That’s it for this tutorial. Stay tuned for more.