Kafka is one of the most popular event streaming platforms in the world. Confluent Control Center not only provides an easy way to create topics, it also provides real-time monitoring of Kafka clusters and other components.

Below is a step-by-step guide on how to set up Confluent Control Center.

#1. Download Confluent Platform

The first thing you need to do is download the Confluent tarball. It includes Kafka, ksqlDB, Schema Registry, Control Center, and the other platform components.

Confluent also provides a cloud service on Azure, GCP, and AWS. For this tutorial, however, I used the manual installer on my local Ubuntu desktop.
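
Unpacking the archive can be sketched as below. The helper name `install_confluent`, the archive file name, and the `/apps/Confluent` target are my assumptions for illustration; adjust them to wherever you saved the download.

```shell
#!/usr/bin/env bash
# Sketch: unpack a downloaded Confluent Platform archive.
# install_confluent is a hypothetical helper, not part of the platform.

install_confluent() {
  local archive="$1"      # e.g. confluent-6.1.0.tar.gz (assumed file name)
  local install_dir="$2"  # e.g. /apps/Confluent

  mkdir -p "$install_dir" || return 1
  # Extract the gzip-compressed tarball into the install directory.
  tar -xzf "$archive" -C "$install_dir" || return 1
}

# Example (commented out so that sourcing this file has no side effects):
# install_confluent confluent-6.1.0.tar.gz /apps/Confluent
# export CONFLUENT_HOME=/apps/Confluent/confluent-6.1.0
# export PATH="$CONFLUENT_HOME/bin:$PATH"
```

The commands in the rest of this guide are run from inside the extracted directory, so the `PATH` change is optional.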

#2. Create ZooKeeper/Kafka Cluster

Since we’re using the Confluent Platform, let’s set up a ZooKeeper and Kafka cluster, which will enable us to use Control Center. Control Center is part of the enterprise license, which has a trial period of 30 days.

If you want to run the ZooKeeper/Kafka cluster on a single host, each node needs its own ports. For a 3-node ZooKeeper/Kafka cluster, you will need 3 separate zookeeper.properties and server.properties files, one per node.

zookeeper.properties would look something like the example below. The dataDir and clientPort parameters need to be changed for each node.

    dataDir=/apps/Confluent/confluent-6.1.0/zookeeper/z1
    clientPort=2181
    tickTime=2000
    initLimit=10
    syncLimit=5
    server.0=localhost:2888:3888
    server.1=localhost:2889:3889
    server.2=localhost:2890:3890
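
Writing three near-identical files by hand is error-prone, so here is a minimal sketch that generates them. The `BASE_DIR` variable and the `zookeeper-N.properties` file names are my own assumptions. It also writes a `myid` file into each dataDir, which ZooKeeper uses to tell the nodes of an ensemble apart; the ids 0 to 2 simply mirror the `server.N` entries in the sample above.

```shell
#!/usr/bin/env bash
# Sketch: one properties file and one myid file per ZooKeeper node, single host.
# BASE_DIR defaults to a throwaway directory; point it at your Confluent directory.
BASE_DIR="${BASE_DIR:-$(mktemp -d)}"

for i in 1 2 3; do
  id=$((i - 1))                        # 0..2, matching server.0..server.2
  data_dir="$BASE_DIR/zookeeper/z$i"
  mkdir -p "$data_dir" "$BASE_DIR/etc/kafka"

  # Each node reads its own id from a myid file inside its dataDir.
  echo "$id" > "$data_dir/myid"

  # clientPort becomes 2181, 2182, 2183 so the nodes do not collide.
  cat > "$BASE_DIR/etc/kafka/zookeeper-$i.properties" <<EOF
dataDir=$data_dir
clientPort=$((2180 + i))
tickTime=2000
initLimit=10
syncLimit=5
server.0=localhost:2888:3888
server.1=localhost:2889:3889
server.2=localhost:2890:3890
EOF
done

echo "Wrote ZooKeeper configs under $BASE_DIR/etc/kafka"
```

Run it as `BASE_DIR=/apps/Confluent/confluent-6.1.0 bash make-zk-configs.sh` (the script name is up to you) to write into the real installation.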

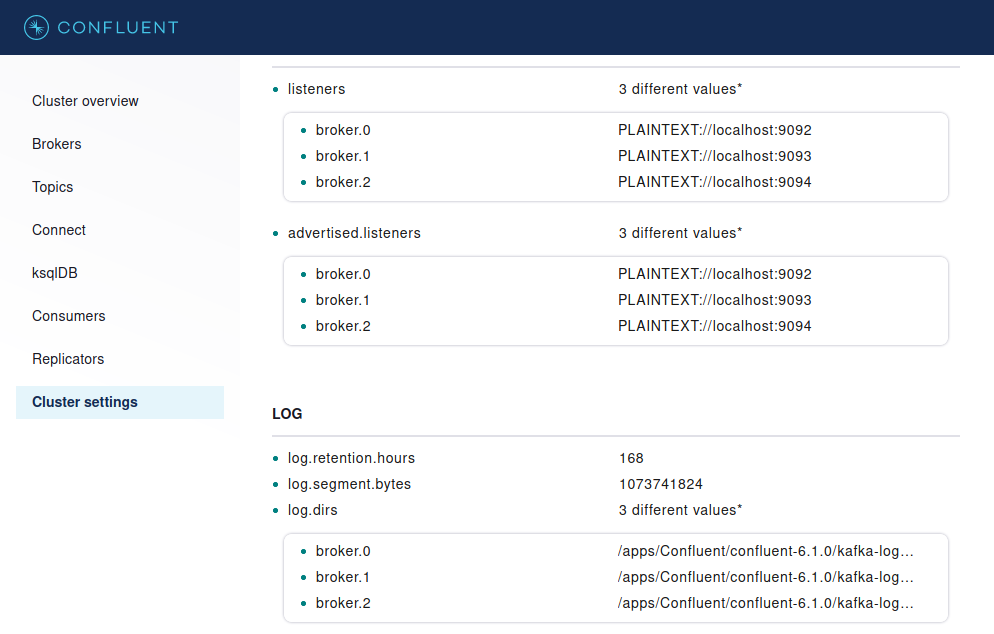

For server.properties, you will need to change a few of the properties for each broker.

    ############################# Server Basics #############################

    # The id of the broker. This must be set to a unique integer for each broker.
    broker.id=0

    ############################# Socket Server Settings #############################

    listeners=PLAINTEXT://localhost:9092
    advertised.listeners=PLAINTEXT://localhost:9092

    # A comma separated list of directories under which to store log files
    log.dirs=/apps/Confluent/confluent-6.1.0/kafka-logs-1

    ############################# Zookeeper #############################

    # Zookeeper connection string (see zookeeper docs for details).
    # This is a comma separated host:port pairs, each corresponding to a zk
    # server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".
    # You can also append an optional chroot string to the urls to specify the
    # root directory for all kafka znodes.
    zookeeper.connect=localhost:2181,localhost:2182,localhost:2183

    ##################### Confluent Metrics Reporter #######################
    # Confluent Control Center and Confluent Auto Data Balancer integration
    #
    # Uncomment the following lines to publish monitoring data for
    # Confluent Control Center and Confluent Auto Data Balancer
    # If you are using a dedicated metrics cluster, also adjust the settings
    # to point to your metrics kafka cluster.
    metric.reporters=io.confluent.metrics.reporter.ConfluentMetricsReporter
    confluent.metrics.reporter.bootstrap.servers=localhost:9092
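
The per-broker differences come down to the id, the listener port, and the log directory. A rough sketch that emits just those values for three brokers follows; the `server-N.properties` names, `BASE_DIR`, and the `kafka-logs-N` pattern are assumptions of mine, and any other settings from the stock server.properties would still need to be carried over.

```shell
#!/usr/bin/env bash
# Sketch: per-broker overrides for three brokers on one host.
# BASE_DIR defaults to a throwaway directory; point it at your Confluent directory.
BASE_DIR="${BASE_DIR:-$(mktemp -d)}"
mkdir -p "$BASE_DIR/etc/kafka"

for i in 1 2 3; do
  port=$((9091 + i))                   # 9092, 9093, 9094
  cat > "$BASE_DIR/etc/kafka/server-$i.properties" <<EOF
broker.id=$((i - 1))
listeners=PLAINTEXT://localhost:$port
advertised.listeners=PLAINTEXT://localhost:$port
log.dirs=$BASE_DIR/kafka-logs-$i
zookeeper.connect=localhost:2181,localhost:2182,localhost:2183
metric.reporters=io.confluent.metrics.reporter.ConfluentMetricsReporter
confluent.metrics.reporter.bootstrap.servers=localhost:9092
EOF
done

echo "Wrote broker configs under $BASE_DIR/etc/kafka"
```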

Enabling the metrics reporter makes each Kafka broker publish its metrics so that Control Center can display them.

Below are the commands to start ZooKeeper & Kafka.

    $ bin/zookeeper-server-start etc/kafka/zookeeper.properties &
    $ bin/kafka-server-start etc/kafka/server.properties &

Verify that both the ZooKeeper and Kafka processes are running.
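
For a 3-node setup the same two commands are repeated once per properties file. A small sketch, assuming the files are named `zookeeper-N.properties` and `server-N.properties` (hypothetical names) and that you run it from the Confluent directory. It defaults to a dry run that only prints the commands; the 10-second pause is an arbitrary guess to let ZooKeeper settle before the brokers connect.

```shell
#!/usr/bin/env bash
# Sketch: start three ZooKeeper nodes, then three brokers.
# DRY_RUN=1 (the default here) prints the commands instead of running them.
DRY_RUN="${DRY_RUN:-1}"

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@" &          # run in the background, as in the commands above
  fi
}

start_cluster() {
  local i
  for i in 1 2 3; do
    run bin/zookeeper-server-start "etc/kafka/zookeeper-$i.properties"
  done
  # Give the ensemble a moment before the brokers try to connect.
  [ "$DRY_RUN" = "1" ] || sleep 10
  for i in 1 2 3; do
    run bin/kafka-server-start "etc/kafka/server-$i.properties"
  done
}

start_cluster
```

Set `DRY_RUN=0` to actually launch the processes.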

    $ ps -ef | grep kafka
    root 13293 6758 1 20:42 ? 00:00:19 java -Xmx512M -Xms512M -server -XX:+UseG1GC -XX:MaxGCPauseMillis=20 -XX:InitiatingHeapOccupancyPercent=35 -XX:+ExplicitGCInvokesConcurrent -XX:MaxInlineLevel=15 -Djava.awt.headless=true -Xlog:gc*:file=/apps/Confluent/confluent-6.1.0/bin/../logs/zookeeper-gc.log:time,tags:filecount=10,filesize=100M -Dcom.sun.management.jmxremote -Dcom.sun.management.jmxremote.authenticate=false -Dcom.sun.management.jmxremote.ssl=false -Dkafka.logs.dir=/apps/Confluent/confluent-6.1.0/bin/../logs -Dlog4j.configuration=file:bin/../etc/kafka/log4j.properties -cp /apps/Confluent/confluent-6.1.0/bin/../ce-broker-plugins/build/libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-broker-plugins/build/dependant-libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-auth-providers/build/libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-auth-providers/build/dependant-libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-rest-server/build/libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-rest-server/build/dependant-libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-audit/build/libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-audit/build/dependant-libs/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/kafka/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/confluent-metadata-service/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/rest-utils/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/confluent-common/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/ce-kafka-http-server/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/ce-kafka-rest-servlet/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/ce-kafka-rest-extensions/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/kafka-rest-lib/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/confluent-security/kafka-rest/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/confluent-security/schema-validator/*:/apps/Confluent/confluent-6.1.0/bin/../support-metrics-client/build/dependant-libs-2.13.3/*:/apps/Confluent/confluent-6.1.0/bin/../support-metrics-client/build/libs/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/confluent-telemetry/*:/usr/share/java/support-metrics-client/* org.apache.zookeeper.server.quorum.QuorumPeerMain etc/kafka/zookeeper.properties
    root 22145 6758 49 20:48 ? 00:06:29 java -Xmx1G -Xms1G -server -XX:+UseG1GC -XX:MaxGCPauseMillis=20 -XX:InitiatingHeapOccupancyPercent=35 -XX:+ExplicitGCInvokesConcurrent -XX:MaxInlineLevel=15 -Djava.awt.headless=true -Xlog:gc*:file=/apps/Confluent/confluent-6.1.0/bin/../logs/kafkaServer-gc.log:time,tags:filecount=10,filesize=100M -Dcom.sun.management.jmxremote -Dcom.sun.management.jmxremote.authenticate=false -Dcom.sun.management.jmxremote.ssl=false -Dkafka.logs.dir=/apps/Confluent/confluent-6.1.0/bin/../logs -Dlog4j.configuration=file:bin/../etc/kafka/log4j.properties -cp /apps/Confluent/confluent-6.1.0/bin/../ce-broker-plugins/build/libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-broker-plugins/build/dependant-libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-auth-providers/build/libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-auth-providers/build/dependant-libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-rest-server/build/libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-rest-server/build/dependant-libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-audit/build/libs/*:/apps/Confluent/confluent-6.1.0/bin/../ce-audit/build/dependant-libs/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/kafka/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/confluent-metadata-service/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/rest-utils/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/confluent-common/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/ce-kafka-http-server/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/ce-kafka-rest-servlet/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/ce-kafka-rest-extensions/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/kafka-rest-lib/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/confluent-security/kafka-rest/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/confluent-security/schema-validator/*:/apps/Confluent/confluent-6.1.0/bin/../support-metrics-client/build/dependant-libs-2.13.3/*:/apps/Confluent/confluent-6.1.0/bin/../support-metrics-client/build/libs/*:/apps/Confluent/confluent-6.1.0/bin/../share/java/confluent-telemetry/*:/usr/share/java/support-metrics-client/* kafka.Kafka etc/kafka/server.properties
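
The `ps` output is hard to scan, so an alternative is to probe the listener ports directly. This is only a sketch: `port_open` and `check_ports` are hypothetical helpers, and they rely on bash's `/dev/tcp` redirection, so they need bash rather than plain `sh`.

```shell
#!/usr/bin/env bash
# Sketch: check whether something is listening on the ports used in this guide.

port_open() {
  local host="$1" port="$2"
  # The subshell opens a TCP connection on fd 3; the fd closes when it exits.
  (exec 3<>"/dev/tcp/$host/$port") 2>/dev/null
}

check_ports() {
  local port status=0
  for port in "$@"; do
    if port_open localhost "$port"; then
      echo "port $port: listening"
    else
      echo "port $port: not reachable"
      status=1
    fi
  done
  return "$status"
}

# Example: ZooKeeper client ports and broker listeners from the configs above.
# check_ports 2181 2182 2183 9092 9093 9094
```

An open port only shows that a process is listening, not that the broker has joined the cluster.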

To confirm that all the brokers are running, run the command below against each ZooKeeper node. It lists the ids of all registered brokers.

    $ bin/zookeeper-shell localhost:2181 ls /brokers/ids
    Connecting to localhost:2181
    WATCHER::
    WatchedEvent state:SyncConnected type:None path:null
    [0, 1, 2]

    $ bin/zookeeper-shell localhost:2182 ls /brokers/ids
    Connecting to localhost:2182
    WATCHER::
    WatchedEvent state:SyncConnected type:None path:null
    [0, 1, 2]

    $ bin/zookeeper-shell localhost:2183 ls /brokers/ids
    Connecting to localhost:2183
    WATCHER::
    WatchedEvent state:SyncConnected type:None path:null
    [0, 1, 2]
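
If you want to script this check, the id list can be pulled out of the shell output and compared with what you expect. A minimal sketch; `broker_ids` is a hypothetical helper and `0,1,2` is simply the set of ids used in this guide.

```shell
#!/usr/bin/env bash
# Sketch: extract the broker id list from zookeeper-shell output read on stdin.

broker_ids() {
  # Keep the last line shaped like "[0, 1, 2]", then strip brackets and spaces.
  grep -E '^\[[0-9, ]*\]$' | tail -n 1 | sed 's/[][ ]//g'
}

# Example against a live ensemble (run from the Confluent directory):
# ids="$(bin/zookeeper-shell localhost:2181 ls /brokers/ids 2>/dev/null | broker_ids)"
# if [ "$ids" = "0,1,2" ]; then echo "all brokers registered"; else echo "got: $ids"; fi
```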

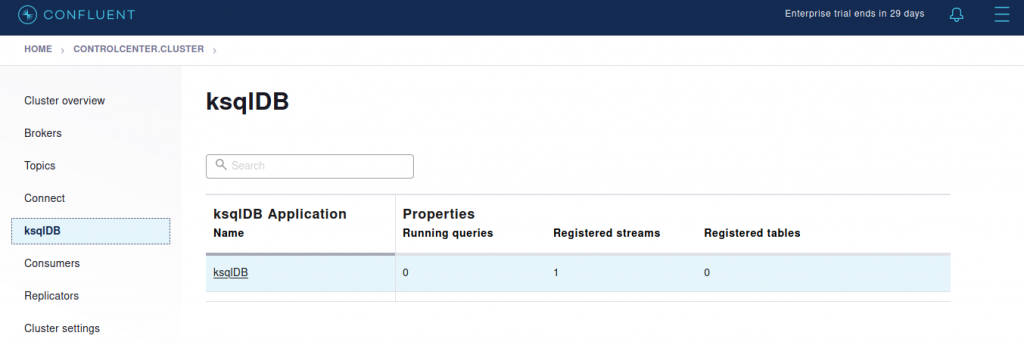

#3. Start ksqlDB server

If you’re looking to create a stream processing application, using ksqlDB is one of the easiest ways to process streams in real time.

    $ bin/ksql-server-start etc/ksqldb/ksql-server.properties &

Once the ksqlDB server has started, you will be able to log in to the ksql prompt. The ksqlDB server details also appear in Confluent Control Center.

    $ bin/ksql
    OpenJDK 64-Bit Server VM warning: Option UseConcMarkSweepGC was deprecated in version 9.0 and will likely be removed in a future release.

    ===========================================
    =       _              _ ____  ____       =
    =      | | _____  __ _| |  _ \| __ )      =
    =      | |/ / __|/ _` | | | | |  _ \      =
    =      |   <\__ \ (_| | | |_| | |_) |     =
    =      |_|\_\___/\__, |_|____/|____/      =
    =                   |_|                   =
    =  Event Streaming Database purpose-built =
    =        for stream processing apps       =
    ===========================================

    Copyright 2017-2020 Confluent Inc.

    CLI v6.1.0, Server v6.1.0 located at http://localhost:8088
    Server Status: RUNNING

    Having trouble? Type 'help' (case-insensitive) for a rundown of how things work!

    ksql>
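
You can also check the server without opening the CLI by calling its REST API. The sketch below queries the `/info` endpoint with curl; `ksql_info` is a hypothetical helper, and the default URL is the one shown in the banner above.

```shell
#!/usr/bin/env bash
# Sketch: ask the ksqlDB server for its info document over HTTP.

ksql_info() {
  local url="${1:-http://localhost:8088}"
  # --fail maps HTTP errors to a non-zero exit code; --max-time bounds the wait.
  curl --silent --show-error --fail --max-time 5 "$url/info"
}

# Example:
# ksql_info && echo    # prints a JSON document describing the server
```

A non-zero exit code means the server is not reachable or answered with an HTTP error.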

#4. Start Confluent Control Center

Before you start Control Center, add the ZooKeeper and Kafka connection details to control-center.properties.

    ############################# Server Basics #############################

    # A comma separated list of Apache Kafka cluster host names (required)
    # NOTE: should not be localhost
    bootstrap.servers=localhost:9092,localhost:9093,localhost:9094

    # A comma separated list of ZooKeeper host names (for ACLs)
    zookeeper.connect=localhost:2181,localhost:2182,localhost:2183

    # KSQL cluster URL
    confluent.controlcenter.ksql.ksqlDB.url=http://localhost:8088

Use the below command to start the Confluent Control Center.

    $ bin/control-center-start etc/confluent-control-center/control-center.properties &

Once the service starts running, go to http://localhost:9021.
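
Control Center can take a while to come up, so rather than refreshing the browser you can poll the URL. A rough sketch: `wait_for_url` is a hypothetical helper, the 120-second default timeout is an arbitrary choice, and it assumes the UI answers a plain GET with a success status once it is ready.

```shell
#!/usr/bin/env bash
# Sketch: poll a URL every 5 seconds until it answers or the timeout expires.

wait_for_url() {
  local url="$1" timeout="${2:-120}" waited=0
  until curl --silent --fail --output /dev/null --max-time 5 "$url"; do
    waited=$((waited + 5))
    # Give up once the accumulated wait reaches the timeout.
    [ "$waited" -ge "$timeout" ] && return 1
    sleep 5
  done
}

# Example:
# wait_for_url http://localhost:9021 && echo "Control Center is up"
```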

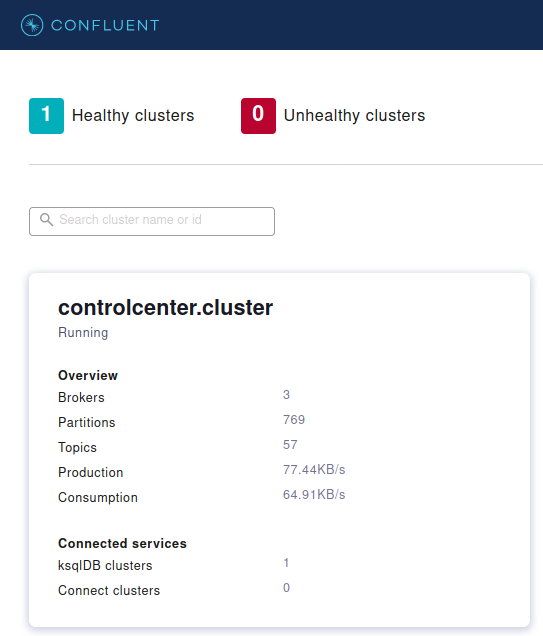

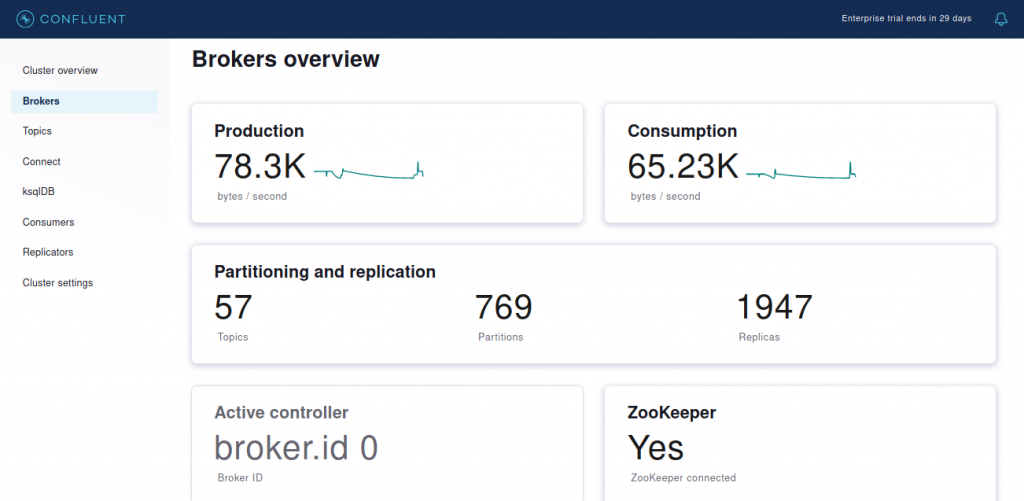

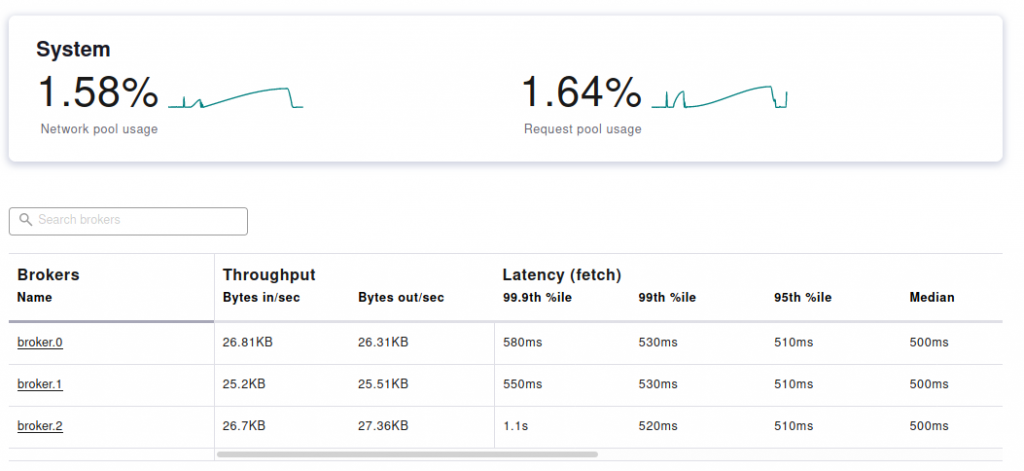

Confluent Control Center provides real-time monitoring of Kafka brokers and data processing details.

Now that the control center is up and running, you should be able to manage and monitor Kafka.

So, that’s it for this tutorial. Stay tuned for more.